SAAS DISCOVERER

One tool to untangle the spaghetti of your legacy system.

One tool to identify the obsolete parts of code.

One and only tool you need to decide what happens next.

WHAT IS DISCOVERER (d8r)

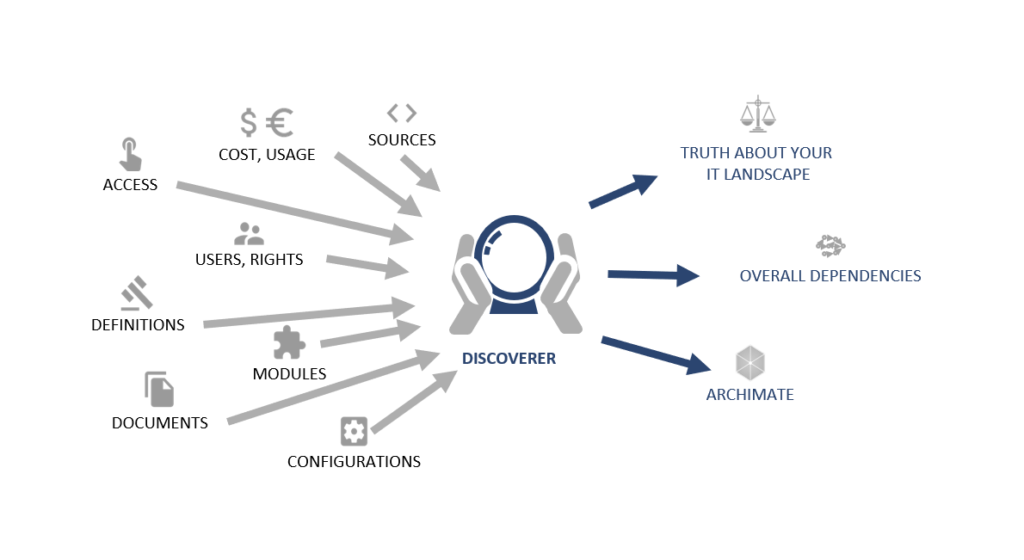

An enterprise-level framework and platform for real-time architecture enablement of cross-language assets. Load, enable, maintain and visualize assets across multiple programming languages, and find all the dependencies hidden behind the source code, schedules, jobs, scripts, macros and other assets needed to run your enterprise portfolio. Discoverer helps you gather data, analyse dependencies, carry out impact analyses, save time on root cause analyses and keep visibility each time a new RTP is processed.

You will learn about current and potential issues without delay and benefit from our time-tested professional development and support.

Drawing on usage data from your system, Discoverer lets you uncover dependencies in “forgotten” assets, identify obsolete functionality and open the door to new ways of optimizing your architecture.

Give yourself the tool you need for crucial visibility across multiple programs, and improve the quality of your services without raising operating costs.

WHY DISCOVERER?

THE REAL SOURCE OF TRUTH

Do you trust your documentation? Is it outdated, antique or simply passé? Do you know how your systems were deployed and configured? The only truth is in the source code, schedules and configurations, and it is ever changing. You need to go to the real sources to see what is behind your IT.

USE, VALUE, COSTS

Do you know where the money goes? Do you know which report runs all night, yet no one ever reads it? Are you comfortable decommissioning an application when its data are used everywhere in end-user computing?

Connect use, value and costs to real application assets to see where to put your effort.

A CRYSTAL BALL

Have you ever wanted to know what is hidden or kept secret from you? Discoverer is a crystal ball you can use to look into your IT landscape. With Discoverer, you can see all dependencies and make decisions based on real data and relations that are always up to date.

CONNECT ENTERPRISE ARCHITECTURE

Are your enterprise architects describing how your enterprise looked years ago, or do they have no idea at all about its AS-IS state? Did they just finish an expensive EA project that is already outdated?

They need to connect their results to the real assets used to build the enterprise instead of using static boxes without any link to the real world.

WHAT CAN DISCOVERER DO?

Analyze program source code, configuration files, deployment scripts, databases and usage data.

HOW?

By loading the assets, parsing them, understanding what they do and indexing the parsed output for full-text search.

RESULT

Discovery of dependencies and of their relations to your applications.

AND WHY IS THIS ONE DIFFERENT?

BASED ON REAL NEEDS

Discoverer was not created artificially. It is based on real needs and years of support and architecture work done by our team members. From the support point of view, it cuts outage time to a fraction and, most importantly, it eliminates dependency on knowledge hidden in the heads of support staff. Architecture outputs backed by real assets are a living work that provides long-term value for EA.

COMPREHENSIVE

By combining source code, scheduler definitions, configurations, deployment scripts, usage, rights, data definitions, documentation and architecture diagrams, it provides a unique comprehensive solution capable of unravelling your IT spaghetti. Users can start with architecture and business services and dig deeper to realization, or they can go the opposite direction from code to business knowledge.

OPEN TO THE WHOLE ENTERPRISE

Discoverer’s users are not limited to a small number of people in a “strange” IT department. We focus on everybody connected to IT, even end users. Of course, not all of them are direct users, but the reports and architecture assets extracted from the tool provide value to everybody in the enterprise.

EXTENDABLE

Discoverer has been made as a framework from the beginning. We are not limited to a particular language, script, database or scheduler. In the end, users are able to load everything into the tool and see the overall IT landscape—from a well-structured mainframe to modern edge technologies.

PRODUCT FEATURES

Dashboards

Structure visualization

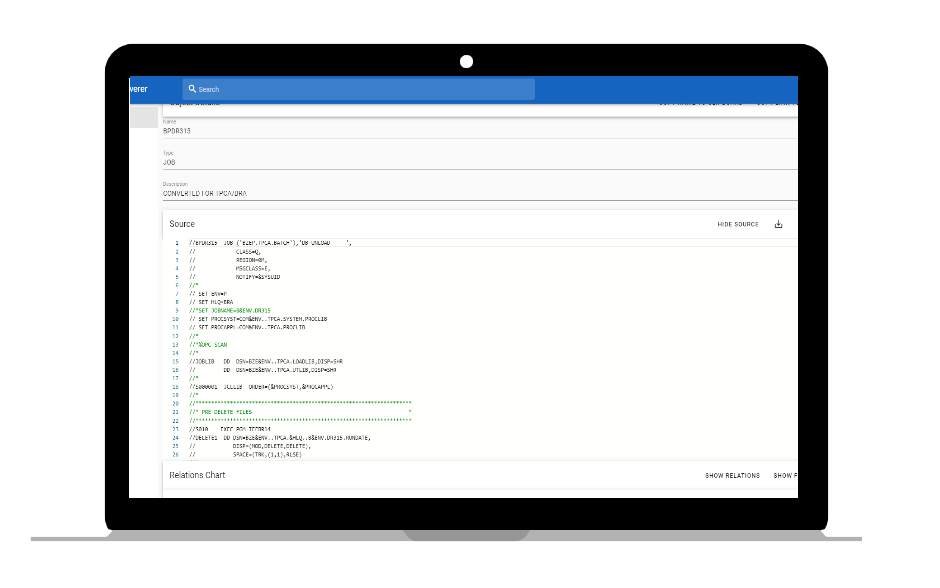

Show names, attributes, links to other assets and source code.

Usage visualization

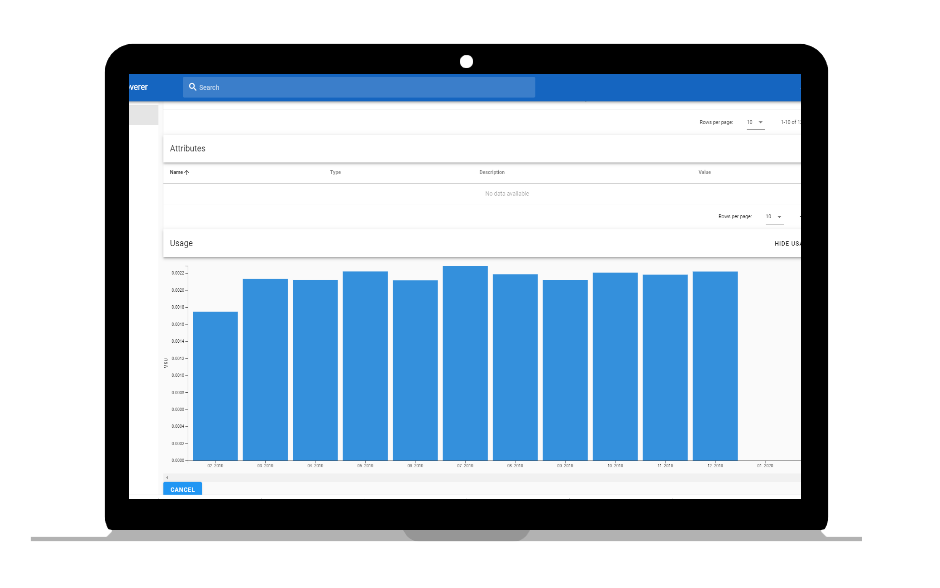

Show basic usage information—what has been accessed and when.

Security & instance control

Analytics – Zombie code

Zombie code is not as easy to detect as dead code. It is code that could be executed but never is: either users do not use it, or the conditions to execute it are never met. This code is like a real zombie, slowly walking, eating your brain (code base) and killing your support (you always have to investigate zombie code and check whether it has played a role in any problems).
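A minimal sketch of the idea in Python, assuming a static call graph and a set of programs observed in usage logs. Both inputs, and the names `CALL_GRAPH`, `EXECUTED` and `zombie_candidates`, are hypothetical. Assets that are statically reachable from an entry point but never appear in the usage data are zombie candidates:

```python
from collections import deque

# Hypothetical static call graph: caller -> programs it can call.
CALL_GRAPH = {
    "JOB_NIGHTLY": ["PGM_A", "PGM_B"],
    "PGM_A": ["PGM_C"],
    "PGM_B": [],
    "PGM_C": [],
}

# Hypothetical usage data: programs observed running during the sample period.
EXECUTED = {"JOB_NIGHTLY", "PGM_A"}


def reachable(graph, entry_points):
    """Return every asset statically reachable from the entry points (BFS)."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        node = queue.popleft()
        for callee in graph.get(node, []):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


def zombie_candidates(graph, entry_points, executed):
    """Reachable assets that never appear in the usage data, sorted by name."""
    return sorted(reachable(graph, entry_points) - set(executed))


print(zombie_candidates(CALL_GRAPH, ["JOB_NIGHTLY"], EXECUTED))  # ['PGM_B', 'PGM_C']
```

The result is only a candidate list: an asset missing from a short usage window may still run at month-end or year-end, so the length of the observation period matters.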

Analytics – Dead code

Dead code is a section of source code in a program which is executed but whose result is never used in any other computation. The execution of dead code wastes computation time and memory. While the result of a dead computation may never be used, it may raise exceptions or affect a global state. Thus, the removal of such code may change the output of the program and introduce unintended bugs. Compiler optimizations are typically conservative in their approach to dead code removal if there is any ambiguity as to whether the removal of the dead code will affect the program output. The programmer may aid the compiler in this matter by making additional use of static and/or inline functions and enabling the use of link-time optimization [as defined at https://en.wikipedia.org/wiki/Dead_code]. Usually, dead code is identified within a single source file. Discoverer looks for broader dependencies and searches for missing linkages between assets to find dead code.
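To illustrate the cross-asset view, here is a minimal sketch assuming the parsers have already produced (asset, action, dataset) links. The link format, the sample data and the function `unread_outputs` are hypothetical. A dataset that is written but never read by any loaded asset points to a computation whose result is never used:

```python
# Hypothetical cross-asset links extracted by parsing: (asset, action, dataset).
LINKS = [
    ("PGM_LOAD", "writes", "CUST.MASTER"),
    ("PGM_REPORT", "reads", "CUST.MASTER"),
    ("PGM_REPORT", "writes", "RPT.DAILY"),
    ("PGM_STATS", "writes", "STATS.TMP"),
]


def unread_outputs(links, external_consumers=frozenset()):
    """Map each dataset that is written but never read to the assets writing it.

    Datasets listed in external_consumers (for example reports delivered to
    users outside the loaded portfolio) are treated as used.
    """
    written = {}
    read = set()
    for asset, action, dataset in links:
        if action == "writes":
            written.setdefault(dataset, set()).add(asset)
        elif action == "reads":
            read.add(dataset)
    return {
        dataset: sorted(writers)
        for dataset, writers in written.items()
        if dataset not in read and dataset not in external_consumers
    }


print(unread_outputs(LINKS, {"RPT.DAILY"}))  # {'STATS.TMP': ['PGM_STATS']}
```

Datasets consumed outside the loaded portfolio (reports sent to users, files transferred elsewhere) have to be declared, otherwise they show up as false positives.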

Usage analytics

Given an asset name, the Discoverer usage module lets you browse that asset's usage. Advanced features answer more concrete queries, such as: Is this file used? Is this table read externally?

Advanced search

Search

Conducts a standard search for assets by name or by content.

Advanced search

Advanced search extends the basic functions with options for objects, processes and screens, and integrates the results into a comprehensive visualization of data and information.

Full-text search

Advanced full-text search using wildcards.

Combined search

The combined search is a configurable module that lets you search across systems, tables, attachments, drafts, tags, comments, metadata and almost everything in between.

Full-text advanced

Full-text search is a more advanced way of searching a database. Full-text search quickly finds all instances of a term (word) in a table without having to scan rows and without having to know which column the term is stored in. Full-text search works by using text indexes.
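The text-index idea can be sketched as an inverted index that maps each word to the documents containing it, so a lookup reads one index entry instead of scanning every row. This is a generic Python sketch, not Discoverer's or Elasticsearch's implementation; the tokenizer rule and the sample documents are assumptions, and wildcards are matched against the index vocabulary with the standard `fnmatch.fnmatchcase`:

```python
import fnmatch
import re
from collections import defaultdict


def tokenize(text):
    """Split text into upper-case tokens made of letters, digits, '_' and '-'."""
    return re.findall(r"[A-Z0-9_-]+", text.upper())


def build_index(documents):
    """Map each token to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for token in tokenize(text):
            index[token].add(doc_id)
    return index


def search(index, pattern):
    """Return sorted ids of documents containing a token matching a wildcard pattern."""
    pattern = pattern.upper()
    hits = set()
    # The wildcard is matched against the vocabulary, not against the documents.
    for token in (t for t in index if fnmatch.fnmatchcase(t, pattern)):
        hits |= index[token]
    return sorted(hits)


# Hypothetical sample assets.
DOCS = {
    "PGM_A": "READ CUST-MASTER INTO WS-CUST",
    "PGM_B": "WRITE CUST-REPORT FROM WS-LINE",
    "JOB_1": "EXEC PGM=PGM_A",
}
INDEX = build_index(DOCS)
print(search(INDEX, "cust-*"))  # ['PGM_A', 'PGM_B']
print(search(INDEX, "pgm_a"))   # ['JOB_1']
```

Matching a wildcard still scans the vocabulary, but the vocabulary is usually far smaller than the stored text.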

Reporting

Extract the application decomposition document in Excel format, with the following details:

- Application -> JCL -> PGM -> Table/File

- IMS screen -> PGM -> Table
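A minimal sketch of how such a decomposition could be flattened into one row per Application -> JCL -> PGM -> Table/File path, assuming the dependency maps below (hypothetical names and data). It writes CSV with the standard `csv` module as a stand-in, since Excel opens CSV directly; a native .xlsx file would need a third-party library:

```python
import csv
import io

# Hypothetical dependency maps produced by the parsers.
APP_JOBS = {"BILLING": ["JBILL01"]}
JOB_PROGRAMS = {"JBILL01": ["PGMINV", "PGMTAX"]}
PROGRAM_DATA = {"PGMINV": ["INVOICE", "CUSTOMER"], "PGMTAX": []}


def decomposition_rows(app_jobs, job_programs, program_data):
    """Flatten Application -> JCL -> PGM -> Table/File into one tuple per path."""
    rows = []
    for app, jobs in app_jobs.items():
        for job in jobs:
            for pgm in job_programs.get(job, []):
                # Programs without data access still get a row, with an empty last column.
                for data in program_data.get(pgm, []) or [""]:
                    rows.append((app, job, pgm, data))
    return rows


def to_csv(rows):
    """Render the rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["Application", "JCL", "PGM", "Table/File"])
    writer.writerows(rows)
    return buffer.getvalue()


ROWS = decomposition_rows(APP_JOBS, JOB_PROGRAMS, PROGRAM_DATA)
print(to_csv(ROWS))
```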

Change impact analyser reports: what else needs to change if I change this file, this table…

Report on asset internal logic, for example program flow and step dependencies.

Exporter

The ArchiMate exporter helps the user seamlessly export all assets and object references to be integrated with ArchiMate.

Additional features make it easier to recognize objects newly added to or removed from ArchiMate. Newly added elements are marked by versioning, while deleted elements (to be removed from diagrams) and broken collaborations are highlighted.

Other features help you generate diagrams with a modern graphical layout and update existing diagrams, color-coding the changes so they are easy to spot.

Integration with a Neo4j graph database running in a Docker container automatically transfers objects to that platform and visualizes all references available in Discoverer.

The advanced version helps you select the specific attributes that should be exportable directly to Neo4j.

Combined export will help you export the selected data from ArchiMate and Discoverer.

The universal export function helps you export objects in a standardized and documented format such as Excel, CSV, etc.

A dedicated export generates the necessary assets for tools outside ArchiMate, such as Enterprise Architect (EA) from Sparx Systems.

Duplicate code detector

The module searches the code and tries to identify identical pieces of code at the file level.

The module searches the code and tries to identify identical pieces of code utilizing full-text search.

The module searches the code and tries to identify identical pieces of functionality using AI, recognizing what parts of a program do rather than relying only on text search or textual similarity.
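The file-level approach can be sketched by hashing each file's normalized content and grouping files with equal hashes. The normalization rule (dropping trailing whitespace and blank lines), the helper names and the sample files are assumptions for illustration:

```python
import hashlib
from collections import defaultdict


def fingerprint(source):
    """SHA-256 of the source after trimming trailing whitespace and dropping blank lines."""
    lines = [line.rstrip() for line in source.splitlines()]
    normalized = "\n".join(line for line in lines if line)
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def duplicate_groups(files):
    """Return sorted groups of file names whose normalized contents are identical."""
    groups = defaultdict(list)
    for name, source in files.items():
        groups[fingerprint(source)].append(name)
    return sorted(sorted(names) for names in groups.values() if len(names) > 1)


# Hypothetical sample files.
FILES = {
    "PGMA.cbl": "MOVE A TO B.\nDISPLAY B.\n",
    "PGMA_COPY.cbl": "MOVE A TO B.   \n\nDISPLAY B.",
    "PGMB.cbl": "MOVE C TO D.\n",
}
print(duplicate_groups(FILES))  # [['PGMA.cbl', 'PGMA_COPY.cbl']]
```

Sequence numbers in columns 1 to 6 of fixed-format COBOL would defeat this simple rule, so a real normalizer would need language-specific steps.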

Settings

The settings section allows you to configure the application, manage entities (for example, attributes for OPC), manage on-premises or cloud instances and manage multiple servers if they are used while loading the data.

DETAILED INFORMATION

Source code repository

Inventory

Inventory analytics summarize key information about the asset inventory and provide an overview and filtering of assets across the repository. The advanced version adds usage heat maps that help you judge how critical an asset or a potential change is and prioritize root cause work.

Dependency browser

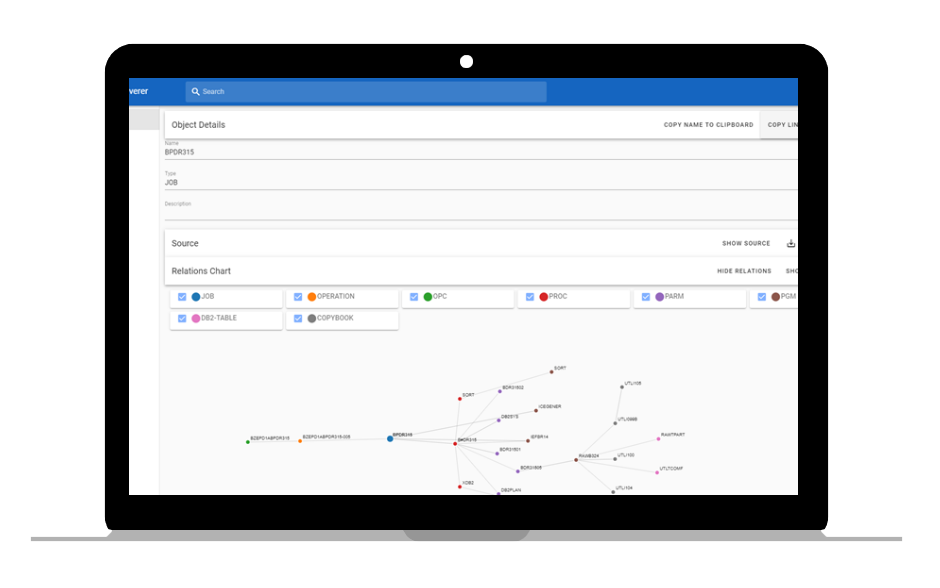

Via the dependency browser, you can explore datasets loaded during initial or incremental data loads and discover all the dependencies between various objects. You can configure the browser to visualize data in the current dataset or, if configured, across multiple datasets.

Advanced functions allow you to configure multiple levels of filtering in the dependency browser to limit the number of results, e.g. skip some levels or show only selected types. The dependency impact analyser helps you gauge the size of the impact of a planned code change and automatically identifies all the affected areas that need to be considered when planning the change.

When used together with the change impact analyser, this functionality shows the dependencies including usage data. This helps you assess the size of the impact and answer questions such as whether users will be affected, how many, and when the program usually runs.
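The impact analysis can be sketched as a breadth-first walk over reversed dependency edges, with an optional depth limit and a type filter similar to the browser filters described above. The graph, the asset types and the function names are hypothetical:

```python
from collections import deque

# Hypothetical forward dependencies: asset -> assets it uses.
DEPENDS_ON = {
    "JOB_BILL": ["PGM_INV"],
    "PGM_INV": ["TBL_CUSTOMER", "PGM_FMT"],
    "PGM_FMT": [],
    "SCREEN_CUST": ["PGM_CUSTUPD"],
    "PGM_CUSTUPD": ["TBL_CUSTOMER"],
}
# Hypothetical asset types for the "show only selected types" filter.
ASSET_TYPE = {
    "JOB_BILL": "JCL", "PGM_INV": "PGM", "PGM_FMT": "PGM",
    "SCREEN_CUST": "IMS screen", "PGM_CUSTUPD": "PGM", "TBL_CUSTOMER": "Table",
}


def reverse_edges(depends_on):
    """Invert 'A uses B' into 'B is used by A'."""
    used_by = {}
    for user, targets in depends_on.items():
        for target in targets:
            used_by.setdefault(target, []).append(user)
    return used_by


def impacted(changed, depends_on, max_depth=None, types=None, asset_type=None):
    """Return {asset: distance} for assets that directly or transitively use `changed`."""
    used_by = reverse_edges(depends_on)
    depth = {changed: 0}
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        if max_depth is not None and depth[node] >= max_depth:
            continue  # depth limit reached: do not expand further
        for user in used_by.get(node, []):
            if user not in depth:
                depth[user] = depth[node] + 1
                queue.append(user)
    del depth[changed]
    if types is not None:
        kinds = asset_type or {}
        depth = {a: d for a, d in depth.items() if kinds.get(a) in types}
    return depth


print(impacted("TBL_CUSTOMER", DEPENDS_ON, types={"JCL", "IMS screen"}, asset_type=ASSET_TYPE))
```

Breadth-first order means each asset is reported with its shortest distance from the change, which is a natural basis for "skip some levels" style filtering.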

Resource consumption

The usage module provides a generic framework for loading and storing data from dynamic sources, mainly the usage of a system and its associated costs. It supports multiple data sources such as the Windows file system, Linux, FTP and ODBC, and as part of its internal processing it normalizes the data (using the same naming structure as Discoverer), groups it (keeping only data aggregated per day) and simplifies it.
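The normalize-and-group step can be sketched as follows, assuming raw records of (timestamp, asset name as logged, CPU seconds) and a naming rule of trimming and upper-casing. The record layout, the rule and the function names are hypothetical, since the actual formats are not specified here:

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical raw usage records: (ISO timestamp, asset name as logged, CPU seconds).
RAW = [
    ("2024-03-01T01:15:00", "pgminv ", 12.5),
    ("2024-03-01T23:40:00", "PGMINV", 3.0),
    ("2024-03-02T02:00:00", "PgmInv", 4.0),
]


def normalize_name(name):
    """Assumed naming rule: trim whitespace and upper-case."""
    return name.strip().upper()


def group_per_day(records):
    """Aggregate (run count, total CPU seconds) per normalized asset and calendar day."""
    totals = defaultdict(lambda: [0, 0.0])
    for stamp, name, cpu in records:
        day = datetime.fromisoformat(stamp).date().isoformat()
        entry = totals[(normalize_name(name), day)]
        entry[0] += 1
        entry[1] += cpu
    # Only the daily aggregates are kept; individual records are discarded.
    return {key: tuple(value) for key, value in sorted(totals.items())}


print(group_per_day(RAW))
```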

ONBOARDING PROCESSES

Source code onboarding

Automated asset import

The dependency extractor helps you load data into Discoverer semi-automatically. Supported assets include TWS schedules, JCLs, PL/I and COBOL programs, IMS screens and procedures, as well as tables and their structures, imported either from DDLs or from their realisation in the DB.

Generic asset import

Allows you to import any other information in a generic XML or CSV file format, for example job or program descriptions, or generic assets and their linkage (programs without sources and so on).
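A minimal sketch of validating such a CSV import before loading it. The column layout (`source`, `source_type`, `relation`, `target`, `target_type`) and the function `load_links` are hypothetical, not Discoverer's documented import format:

```python
import csv
import io

# Hypothetical CSV layout; the real import format may differ.
SAMPLE = """source,source_type,relation,target,target_type
JOBX,JCL,executes,PGMX,PGM
PGMX,PGM,reads,CUST.FILE,File
"""

REQUIRED = {"source", "source_type", "relation", "target", "target_type"}


def load_links(text):
    """Parse generic asset links as (source, relation, target), rejecting incomplete input."""
    reader = csv.DictReader(io.StringIO(text))
    missing = REQUIRED - set(reader.fieldnames or [])
    if missing:
        raise ValueError(f"missing columns: {sorted(missing)}")
    links = []
    # Line 1 is the header, so data rows start at line 2.
    for line_no, row in enumerate(reader, start=2):
        # Short rows yield None for absent fields, so treat None as empty.
        if any(not (row.get(col) or "").strip() for col in REQUIRED):
            raise ValueError(f"incomplete row at line {line_no}")
        links.append((row["source"], row["relation"], row["target"]))
    return links


print(load_links(SAMPLE))
```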

Manual asset editing

Manual asset linkage enables you to load dependencies into the database, either directly from the tool if the reporting is insufficient, or from other sources (XLS, CSV, manual load via the web). This enables you to add assets that cannot be exported automatically from your system.

Rights management onboarding

User rights links are extracted from PARAM files, allowing the end user to obtain links between users, groups, IMS screens and applications. The extractor helps map the links that relate to DB tables. This information helps you visualize which programs have what type of rights and could possibly influence data or write to a table with relevant data sets. Discoverer reads the PARAM files and extracts data from the repositories, usually gathering it using FTP, Git or rsync. The final source files are stored in Git and used by the parser (as files in a directory).

Rights import

Allows you to import information about user and program rights—therefore allowing you to see who can change or consume a particular piece of data.

Load extractor

The load extractor guides you through the necessary steps to execute the scripts in your environment, helping the administrator collate the relevant data by executing scripts pre-made for this activity. The scripts are ready to extract only the relevant information that is necessary for Discoverer to work properly. The loaded resources are stored in a cloud or on-site secure environment and after validation are processed by a pre-parser for further validations. A logging mechanism can trigger an event that can be traced by an external monitoring tool if necessary.

PROCESSES RUNNING IN THE BACKGROUND

PROCESS FLOW

PRE-PROCESSOR

The internal automated pre-processing engine helps clean up program anomalies and apply special fixes. For example, you can comment out inline instructions, remove some files or add advanced error logging, including integration with an external monitoring system.

PARSER

The parser module parses source code to get its internal logic, using either generic tools (DMS from Semantic Designs, ANTLR, Xtext) or custom-written parsers. The output is an abstract (not concrete) syntax tree.

COMMENTS EXTRACTOR

The extractor is the parser component that enables the export of source code comments and prepares them to be loaded and parsed as meaningful textual documents.
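For fixed-format COBOL, a comment line is marked by `*` or `/` in column 7 (the indicator area), with the text in columns 8 to 72. A minimal sketch of extracting such comments; COBOL is only one assumed input language, and the function and sample are hypothetical rather than a description of Discoverer's extractor:

```python
def extract_comments(cobol_source):
    """Return (line number, text) for non-empty comment lines in fixed-format COBOL.

    Column 7 is the indicator area ('*' or '/' marks a comment line) and
    columns 8-72 hold the comment text.
    """
    comments = []
    for number, line in enumerate(cobol_source.splitlines(), start=1):
        if len(line) >= 7 and line[6] in "*/":
            text = line[7:72].strip()
            if text:  # skip decorative empty comment lines
                comments.append((number, text))
    return comments


# Hypothetical sample with sequence numbers in columns 1-6.
SOURCE = (
    "000100 IDENTIFICATION DIVISION.\n"
    "000200* COMPUTES MONTHLY INVOICE TOTALS\n"
    "000300 PROGRAM-ID. PGMINV.\n"
    "000400*\n"
    "000500/ REVISION HISTORY\n"
)
print(extract_comments(SOURCE))
```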

HIGH-LEVEL DEPENDENCY EXTRACTOR

Extracts high-level structures of calls (for example, procedures and how they are called), linked files, defined variables and other procedural parameters. The dependency extractor considers relationships only after the source has been parsed. It uses manually supplied values for external variables (for example, OPC variables). This enables the visualization of several data layers, allowing you to see information that users have created or deleted manually.
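A minimal sketch of this kind of extraction for JCL, using regular expressions instead of a full parser and a dictionary of manually supplied symbol values. It handles only `&NAME` symbols (a period directly after the name ends the symbol, so `..` leaves one literal period) and only the `DSN=` keyword; unknown symbols are left untouched. All names and data are hypothetical:

```python
import re

EXEC_PGM = re.compile(r"^//(\S*)\s+EXEC\s+PGM=([A-Z0-9@#$]+)")
DD_DSN = re.compile(r"^//(\S*)\s+DD\s+.*?\bDSN=([^,\s]+)")
SYMBOL = re.compile(r"&([A-Z0-9@#$]+)\.?")


def substitute(text, values):
    """Replace &NAME (plus an optional terminating period) with a manual value."""
    def repl(match):
        return values.get(match.group(1), match.group(0))  # keep unknown symbols as-is
    return SYMBOL.sub(repl, text)


def extract_dependencies(jcl, values):
    """Return (step, 'EXEC', program) and (dd, 'DSN', dataset) tuples in source order."""
    deps = []
    for line in jcl.splitlines():
        if line.startswith("//*"):
            continue  # JCL comment statement
        if match := EXEC_PGM.match(line):
            deps.append((match.group(1), "EXEC", match.group(2)))
        elif match := DD_DSN.match(line):
            deps.append((match.group(1), "DSN", substitute(match.group(2), values)))
    return deps


# Hypothetical job and manually supplied variable value.
JCL = """//JBILL01  JOB (ACCT),'BILLING'
//* NIGHTLY BILLING RUN
//STEP1    EXEC PGM=PGMINV
//INFILE   DD DSN=PROD.&RUNDATE..INPUT,DISP=SHR
//OUTFILE  DD DSN=PROD.INVOICES,DISP=(NEW,CATLG)
"""
print(extract_dependencies(JCL, {"RUNDATE": "D240301"}))
```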

CASE STUDIES

Still not sure whether Discoverer is the right solution for you? No problem! We’ve put together case studies to show you how the solution works.

What’s behind the spaghetti?

Use Case:

How Discoverer can help in IT support

Discover the root-cause analytical tool that will help you get to the bottom of the situation when trying to find out, “Why did this or that happen in a system?”

What’s behind the spaghetti?

Use Case:

How Discoverer can change impact analysis

Discover in almost no time the real size of the impact if you change one application, object, parameter, setting or database size/structure.

What’s behind the spaghetti?

Use Case:

How Discoverer can help remove unused code

Get rid of unused parts of your legacy system without the risk of unwanted changes, and with the prospect of reducing support costs. Meet Discoverer.

TECHNOLOGIES

Velocity calculator

Calculates the number of changes and their size to assign a velocity number. The velocity number helps identify the complexity of the planned implementation.

Vocabulary creator

Extracts the verbs and variable names used in the code and builds a generic vocabulary from them.
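A minimal sketch of building such a vocabulary from COBOL-like code, assuming a deliberately small verb list and keyword stop list; both lists and the function name are hypothetical, and a real implementation would work on the parser output rather than raw tokens:

```python
import re
from collections import Counter

# Assumed, deliberately small subsets of COBOL verbs and other reserved words.
VERBS = {"MOVE", "ADD", "SUBTRACT", "COMPUTE", "READ", "WRITE", "PERFORM", "CALL", "DISPLAY"}
KEYWORDS = {"TO", "FROM", "INTO", "GIVING", "UNTIL", "END-PERFORM"}

# Identifiers start with a letter and may contain digits and hyphens.
TOKEN = re.compile(r"[A-Z][A-Z0-9-]*")


def build_vocabulary(source):
    """Count verbs and remaining names (variables, paragraphs) separately."""
    verbs, names = Counter(), Counter()
    for token in TOKEN.findall(source.upper()):
        if token in VERBS:
            verbs[token] += 1
        elif token not in KEYWORDS:
            names[token] += 1
    return verbs, names


SAMPLE = "MOVE WS-TOTAL TO WS-OUT.\nADD WS-TAX TO WS-TOTAL.\nPERFORM CALC-TAX."
verbs, names = build_vocabulary(SAMPLE)
print(verbs.most_common(), names.most_common(1))
```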

Additional asset attributes

This function allows the display of additional attributes such as program value (based on inherited logic), complexity, number of lines, number of comments and file size.

Identify dead code within one file

Look for dead code in a single file.

Rewrite calculator

Helps plan the budget necessary to rewrite a function by counting function points and estimating rewrite costs.

Validate programming rules

Runs various checks against the source, for example whether JCL is procedure-based and whether comments are readable.

External tools – infrastructure

Standard SW – ELK stack – Elasticsearch database

Standard SW – ELK stack – Filebeat, Winlogbeat and Metricbeat to collect data

Standard SW – ELK stack – Kibana – search and dashboard interface

Standard SW – ELK stack – Log collecting and indexing

Standard SW – PostgreSQL – Relational DB

Standard SW – QlikView – Analytical services

Standard SW – Scheduler SW – Scheduling

Standard SW – Zabbix – System monitoring

Standard SW – Neo4j – Graph database